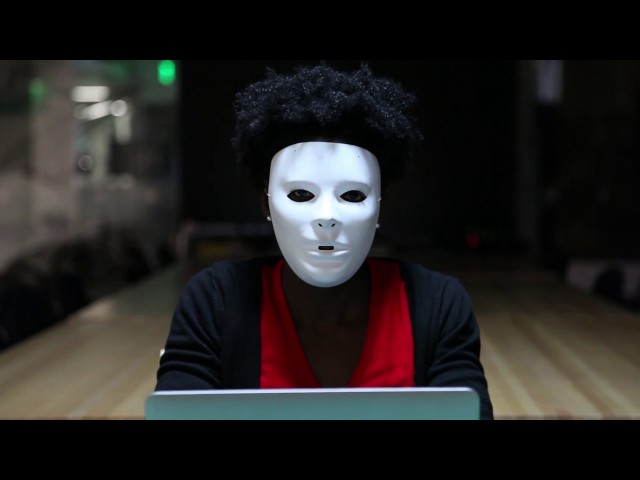

If you wanted to sum up racial bias in AI algorithms in one iconic moment, just take a few seconds of footage from the Netflix documentary Coded Bias. Minutes into the film, MIT researcher Joy Buolamwini discovers that the facial recognition software she installed on her computer wouldn’t recognize her dark-skinned face until she put on a white mask. Released in January 2020, the film introduced the ways algorithmic bias has infiltrated every aspect of our lives, from sorting resumes for job applications and allocating social services to deciding who sees advertisements for housing and who is approved for a mortgage.

More importantly, the film served as a rude awakening to the notion that machines aren’t neutral, that AI bias is “a form of computationally imposed ideology, rather than an unfortunate rounding error,” writes Janus Rose, a senior editor at VICE. Worse still, many of these algorithms are “black boxes,” machine learning models whose inner workings are largely unknown even to their creators because of their immense complexity.

“I am thrilled that these documentaries are out there and people are talking about it because algorithmic literacy is another huge part of it,” said Alison Cossette, a data scientist at NPD Group and a mentor at Springboard. “We need for the general public to understand what algorithms do and don’t do.”

What are black-box algorithms?

Allowing black-box algorithms to make major decisions on our behalf is a symptom of a larger problem: we mistakenly believe that big data yields reliable and objective truths if only we can extract them using machine learning tools—what Microsoft researcher Kate Crawford calls “data fundamentalism.”

Researchers at the University of Georgia discovered that people are more likely to trust answers generated by an algorithm than answers from their fellow humans. The study also found that as the problems became more difficult, participants were more likely to rely on the advice of an algorithm than on a crowdsourced evaluation.

These findings played out in the real world in May 2020, when numerous U.S. government officials relied on a pandemic-prediction model by the Federal Emergency Management Agency to decide when and how to lift stay-at-home orders and allow businesses to reopen, defying the counsel of the Centers for Disease Control and scores of health experts. In fact, that same model predicted in July 2019 that a nationwide pandemic would overwhelm hospitals and result in an economic shutdown.

Thanks to well-documented instances where algorithms outperformed humans, people have a tendency to overestimate AI capabilities just because machines can beat us at Jeopardy!, defeat world chess champions and transcribe audio with fewer mistakes than humans. But when it comes to tasks that require human judgment, common sense, and ethical sensibilities, the AI is exposed for what it really is: a series of mathematical equations that search for correlations between data points without inferring meaningful causal relationships.

“We interact with the real world and our survival depends on us understanding the world; AI does not,” said Hobson Lane, co-founder of Tangible AI and a Springboard mentor. “It just depends on being able to mimic the words coming out of a human’s mouth, and that’s a completely different type of knowledge about the world.”

From a survival standpoint, a world where algorithms curate our social media feeds, music playlists, and the ads we see doesn’t necessarily pose an immediate existential threat, but big decisions about people’s lives are increasingly made by software systems and algorithms.

“No matter what model you are building, someone is making either a policy or financial decision based upon that insight,” said Cossette. “Even if it’s a B2B situation or it’s internal, at some point it rolls out to societal impact.”

When can black-box algorithms become dangerous?

An overreliance on these algorithms can destroy people’s livelihoods, violate their privacy, and perpetuate racism. The potential for algorithms to perpetuate cycles of oppression by excluding certain groups on the basis of race, gender, and other protected attributes is known as technological redlining, derived from real estate redlining, the practice of racial segregation in housing.

In Coded Bias, a celebrated teacher was fired after receiving a low rating from an algorithmic assessment tool, and a group of tenants in a predominantly Black neighborhood in Brooklyn campaigned against their landlord after the installation of a facial recognition system in their building.

When it comes to the U.S. correctional system, algorithms turn sentencing decisions into a game of Russian roulette. Risk assessment models used in courtrooms across the country evaluate a defendant’s risk of reoffending, spitting out scores that prosecutors use to determine a defendant’s bond amount, the length of their sentence, the likelihood of parole, and their risk of recidivism upon release.

In 2016, investigative news outlet ProPublica obtained the risk scores of over 7,000 people arrested in Broward County, Florida, in 2013 and 2014 and checked to see how many were charged with new crimes over the next two years, the same benchmark used by creators of the algorithm. Not only were the scores unreliable–only 20% of the people predicted to commit violent crimes actually did so–but journalists uncovered significant racial disparities.

The formula was particularly likely to flag Black defendants as future criminals, incorrectly labeling them at almost twice the rate of white defendants. In fact, Black defendants were 77% more likely to be flagged as high risk of committing a future violent crime, and 45% more likely to be predicted to commit a future crime of any kind.
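The "incorrectly labeled" comparison above is a false positive rate: among people who did not go on to reoffend, the share who were flagged as high risk anyway. The sketch below shows how that rate can be computed per group. The records are made-up toy values, not ProPublica's data, and the group names and the `false_positive_rates` helper are hypothetical.

```python
from collections import defaultdict

def false_positive_rates(records):
    """Return, per group, the share of non-reoffenders flagged as high risk.

    Each record is (group, flagged_high_risk, reoffended).
    """
    flagged = defaultdict(int)    # non-reoffenders flagged high risk
    negatives = defaultdict(int)  # all non-reoffenders
    for group, flagged_high_risk, reoffended in records:
        if not reoffended:
            negatives[group] += 1
            if flagged_high_risk:
                flagged[group] += 1
    return {group: flagged[group] / negatives[group] for group in negatives}

# Toy records, invented purely for illustration.
records = (
    [("A", True, False)] * 4 + [("A", False, False)] * 6 + [("A", True, True)] * 5
    + [("B", True, False)] * 2 + [("B", False, False)] * 8 + [("B", True, True)] * 5
)

rates = false_positive_rates(records)
disparity = rates["A"] / rates["B"]
print(rates, disparity)
```

In this toy data, both groups have identical numbers of correctly flagged reoffenders, yet group A's non-reoffenders are wrongly flagged twice as often as group B's. An overall accuracy figure would hide that gap, which is why audits compare error rates group by group.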

Can model interpretability provide the answer?

The root of the evil is how hard it can be to understand how these machine learning algorithms arrive at certain computations. “Black box” machine learning models are created directly from the data by an algorithm, meaning that even the humans who design them cannot precisely interpret how the model makes predictions, other than observing the inputs and outputs. In other words, the actual decision process can be entirely opaque.

To better understand this, consider how a neural network functions. Algorithms are essentially a set of step-by-step instructions or operations that a computer carries out in order to make predictions. After the algorithm trains on a set of training data, it generates a new set of rules (a series of supplementary algorithms) based on the results. The process repeats again and again until the algorithm reaches its optimal state (i.e. the highest possible accuracy). However, it’s impossible to fully understand the relative weights a neural network assigns to all the different variables.

For example, let’s say you’re training a neural network to recognize images of sheep, so you train the algorithm on an image dataset of sheep on green pastures. The model might incorrectly assume that the green image pixels are a defining characteristic of sheep, and go on to classify any other object in a grassy setting as such.
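The sheep-on-grass failure can be reproduced in miniature. In the sketch below, a single logistic unit stands in for the neural network, and each "image" is reduced to two hand-made features, `looks_woolly` and `green_background`. Both the features and the eight training examples are assumptions invented for illustration; a real image model learns from pixels, not from named features.

```python
import math

# Each example: ((looks_woolly, green_background), label), where label 1 = sheep.
# Every sheep in this made-up training set is photographed on grass.
TRAINING = [
    ((1, 1), 1), ((1, 1), 1), ((1, 1), 1),  # woolly sheep on grass
    ((0, 1), 1),                            # shorn sheep on grass
    ((0, 0), 0), ((0, 0), 0), ((0, 0), 0),  # other animals indoors
    ((1, 0), 0),                            # a woolly dog indoors
]

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def predict(weights, bias, features):
    """Probability that the example is a sheep."""
    return sigmoid(weights[0] * features[0] + weights[1] * features[1] + bias)

def train(data, learning_rate=0.5, epochs=2000):
    """Plain batch gradient descent on the logistic loss."""
    weights, bias = [0.0, 0.0], 0.0
    n = len(data)
    for _ in range(epochs):
        grad_w, grad_b = [0.0, 0.0], 0.0
        for features, label in data:
            error = predict(weights, bias, features) - label
            grad_w[0] += error * features[0]
            grad_w[1] += error * features[1]
            grad_b += error
        weights = [w - learning_rate * g / n for w, g in zip(weights, grad_w)]
        bias -= learning_rate * grad_b / n
    return weights, bias

weights, bias = train(TRAINING)
cow_on_grass = predict(weights, bias, (0, 1))   # not woolly, green background
sheep_indoors = predict(weights, bias, (1, 0))  # woolly, no green background
print(weights, cow_on_grass, sheep_indoors)
```

Because the background separates the training labels perfectly and woolliness does not, the learned weight on `green_background` ends up larger than the weight on `looks_woolly`. The model then scores a cow on grass as a likely sheep and a woolly sheep indoors as unlikely to be one. Inspecting the two weights makes the shortcut visible, which is the kind of check interpretability is meant to allow.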

Model interpretability enables data scientists to test the causality of the features, adjust the dataset and debug the model accordingly. For example, you can alter the data by adding images with different backgrounds or simply cropping out the background. Deep learning models are prone to learning the most discriminative features, even if they’re the wrong ones. In a production setting, a data scientist may be pressured to select the algorithm with the highest accuracy, even if the training data may have been problematic.

“When the drive is for accuracy, it’s not unthinkable that people are going to game the algorithm so that it looks good,” said Cossette. “If you’re in a Kaggle competition, you want the highest accuracy, and it doesn’t matter in reality if there’s some other sort of impact.”

Most machine learning models are not designed with interpretability constraints in mind. Instead, they are intended to be accurate predictors on a static dataset that may or may not accurately represent real-world conditions.

“Many people do not realize that the problem is often with the data, as opposed to what machine learning does with the data,” Rich Caruana, a senior researcher at Microsoft, said at a 2017 annual meeting of the American Association for the Advancement of Science. “It depends on what you are doing with the model and whether the data is used in the right way or the wrong way.”

Caruana had recently worked on a pneumonia risk model that determined a pneumonia patient’s risk of dying from the disease, and therefore who should be admitted to the hospital. On the basis of the patient data, the model found that those with a history of asthma have a lower risk of dying from pneumonia, when in fact asthma is a well-known risk factor for pneumonia. The model had made that prediction based on the fact that patients with asthma get access to healthcare faster, and therefore concluded that they have a decreased mortality risk.

The misconception that accuracy must be sacrificed for interpretability has allowed companies to market and sell proprietary black-box models for high-stakes decisions when simple interpretable models exist for the same tasks.

“Consumers are vastly more aware of these issues especially as they entangle with data privacy concerns,” said Ayodele Odubela, a data science career coach and responsible AI educator with Kforce at Microsoft. “My organization, Ethical AI Champions, is working on educational tools for engineers in high-risk development teams as well as taking over the marketing aspect of AI products to speak more accurately about algorithm limitations and setting the right expectations for customers and consumers.”

The explainable AI solution

Explainable AI, often abbreviated as ‘XAI,’ is a blanket term for the mainstreaming of interpretable algorithms whose computations can be understood by their creators and users, like showing your work in a math problem.

“I think there are two layers to explainable AI,” said Cossette. “There’s the interpretability of the algorithm itself–which is, why did this thing spit out this answer? Then there are the deep layers and the training data, and how those two things affect the outcome.”

One way to advocate for transparency in machine learning is by only using algorithms that are interpretable. Models like linear regression, logistic regression, decision trees, and other linear regression extensions can be understood through their mathematical formulas and the weights (coefficients) of the variables.

For example, let’s take a hypothetical situation where a business uses a linear regression equation to determine starting salary for its junior employees based on years of work experience and GPA. The equation would look like this:

Salary = W1*experience + W2*GPA

We have an interpretable linear equation where the weighting can tell us which variable is the stronger determinant of salary–experience or GPA. Decision trees are also interpretable because we can see how decisions are made from the root node to the leaf node. However, models become harder to interpret when training a deep decision tree to a depth of eight or nine, where there are too many decision rules to present effectively in a flow diagram. In this case, you can use feature importance to interpret the importance of each feature at a global level.
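The salary equation above can be fitted and read off directly. The sketch below is a minimal least-squares fit for the two-weight form with no intercept, written out by hand so every step is visible. The employee data is synthetic, generated from known weights (5,000 per year of experience, 10,000 per GPA point) so the result can be checked, and the `fit_two_feature_regression` helper is a hypothetical name.

```python
def fit_two_feature_regression(experience, gpa, salary):
    """Least-squares fit of salary = w1*experience + w2*gpa (no intercept).

    Solves the 2x2 normal equations with Cramer's rule.
    """
    s_ee = sum(e * e for e in experience)
    s_gg = sum(g * g for g in gpa)
    s_eg = sum(e * g for e, g in zip(experience, gpa))
    s_es = sum(e * s for e, s in zip(experience, salary))
    s_gs = sum(g * s for g, s in zip(gpa, salary))
    det = s_ee * s_gg - s_eg * s_eg
    w1 = (s_es * s_gg - s_eg * s_gs) / det
    w2 = (s_ee * s_gs - s_eg * s_es) / det
    return w1, w2

# Synthetic employees, invented for illustration.
experience = [0, 1, 2, 3, 4]           # years of work experience
gpa = [3.0, 3.5, 2.8, 3.9, 3.2]
salary = [5000 * e + 10000 * g for e, g in zip(experience, gpa)]

w1, w2 = fit_two_feature_regression(experience, gpa, salary)
print(f"per year of experience: {w1:.0f}, per GPA point: {w2:.0f}")
```

Each weight reads as "salary change per one unit of this variable," so in this made-up data one GPA point is worth as much as two years of experience. Because the units differ, comparing raw weights only makes sense once the ranges of the variables are taken into account: GPA spans about one point here, while experience spans four years.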

While convolutional neural networks (CNN)–used for things like object classification and detection–were once unassailable enigmas due to having hundreds of convolutional layers, where each layer learns filters of increasing complexity, researchers are discovering ways to make them interpretable. One way to do this when building a CNN for image recognition is to train the filters to recognize object parts rather than simple textures–thereby giving the model a clearly defined set of object features to recognize. In the example given by the researchers, a cat would be recognized by its feline facial features rather than its paws, ears or snout, which are present in other animals. This would more closely mimic how humans recognize and classify objects–the ultimate goal of computer vision.

“Algorithms find things in images that the human eye doesn’t find because it can measure the pixel value to extremely high precision, and so it sees some slight pattern in all the pixels,” said Lane. “You have to be really careful about randomizing not only how you generate data, but how you create and label the data–especially with a neural network.”

Interpretable models are important because people need to be able to trust algorithms that make decisions on their behalf. From an ethical standpoint, fairness is a big part of the equation. AI is only as good as the data it’s trained on, and datasets are often fraught with biases for two reasons: the data points reflect real-world conditions of racial and gender discrimination, or, the people who create the algorithms don’t represent the diverse interests and viewpoints of the general population.

In 2018, Amazon scrapped its in-house applicant tracking system because it didn’t like resumes containing the word “women,” so if an applicant listed “mentoring women entrepreneurs” as one of their achievements, they were out of luck. The system had been trained to vet applicants by observing patterns in resumes submitted to the company over a 10-year period, the majority of which had come from men—a reflection of the female talent shortage in the tech industry.

Datasets can also become less accurate over time as social and political circumstances evolve, causing the algorithm’s accuracy to erode due to data obsolescence.

Katie He, a student enrolled in Springboard’s Machine Learning Engineering Career Track, recently encountered this problem while building a machine learning model that would colorize footage using training data from a TV show that aired in the 1970s.

“The skin tone for the actors and actresses was quite homogeneous in the training set because it is a show from the seventies after all,” said He. “So if the model were to colorize footage from a newer TV show, everybody’s face would have the same skin tone, which might not be appropriate.”

Interpretability is necessary except in situations where the algorithmic decision does not directly impact the end-user, such as algorithms that refine internal business processes. Examples include algorithms that perform sentiment analysis to classify call recordings or use machine learning to track mentions of the brand on social media.

Algorithmic auditing and the future of transparency in machine learning

Third parties perform audits to evaluate a business’s regulatory compliance, environmental impact, and process efficiency in order to protect investors from fraud–but the algorithms that directly impact people’s lives are rarely audited. Some companies perform their own audits by evaluating algorithms for bias before and after production, asking questions like: “Is this sample of data representative of reality?” or “Is the algorithm suitably transparent to end-users?”
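The first audit question, whether a sample is representative, can be turned into a simple check. The sketch below compares each group's share of a training sample with its share of a reference population and reports the groups that deviate by more than a tolerance. The group names, counts, reference shares, and the `representation_gaps` helper are all hypothetical.

```python
def representation_gaps(sample_counts, reference_shares, tolerance=0.05):
    """Return {group: observed_share - expected_share} for groups whose
    share of the sample differs from the reference by more than tolerance."""
    total = sum(sample_counts.values())
    gaps = {}
    for group, expected in reference_shares.items():
        observed = sample_counts.get(group, 0) / total
        if abs(observed - expected) > tolerance:
            gaps[group] = round(observed - expected, 3)
    return gaps

# Made-up numbers: the sample over-represents group_x.
sample_counts = {"group_x": 80, "group_y": 20}
reference_shares = {"group_x": 0.5, "group_y": 0.5}

gaps = representation_gaps(sample_counts, reference_shares)
print(gaps)
```

A check like this only covers attributes that were recorded, and an appropriate tolerance depends on the application, so it is a starting point for an audit rather than a complete one.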

Bias can appear at so many different junctures of the development pipeline, from data wrangling to training the model, making it necessary to develop strategies to evaluate each aspect of a model for undue sources of influence. Another important aspect to consider is how the model’s accuracy will erode over time as real-world conditions evolve.

“In general, every model will decrease in accuracy over time,” said Cossette. “The nature of the world is, data is always changing, things are always changing. So your models will always continue to get worse. So how are you monitoring and managing them?”
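One way to approach the monitoring question is to track accuracy over a sliding window of recent predictions and raise a flag when it falls below a chosen floor. The sketch below is a minimal illustration under that assumption. The `AccuracyMonitor` class, the window size, and the threshold are hypothetical choices, not a reference to any particular monitoring product.

```python
from collections import deque

class AccuracyMonitor:
    """Tracks accuracy over the most recent `window` labeled predictions."""

    def __init__(self, window=100, threshold=0.9):
        self.outcomes = deque(maxlen=window)  # True where prediction was right
        self.threshold = threshold

    def record(self, prediction, actual):
        self.outcomes.append(prediction == actual)

    def accuracy(self):
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def needs_review(self):
        """True once the window is full and accuracy is below the threshold."""
        acc = self.accuracy()
        window_full = len(self.outcomes) == self.outcomes.maxlen
        return acc is not None and window_full and acc < self.threshold

monitor = AccuracyMonitor(window=4, threshold=0.75)
for prediction, actual in [(1, 1), (0, 0), (1, 1), (0, 0)]:
    monitor.record(prediction, actual)
before = monitor.needs_review()   # all four recent predictions were right

for prediction, actual in [(1, 0), (0, 1)]:
    monitor.record(prediction, actual)
after = monitor.needs_review()    # window now holds two hits and two misses
print(before, monitor.accuracy(), after)
```

This only works when true outcomes eventually arrive to compare against. Where labels are delayed or unavailable, teams typically watch for shifts in the input data instead.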

Some companies in the AI governance space are contracted by other companies to find and rectify bias in algorithms. Basis AI, a Singapore-based consultancy for responsible AI, serves as a cyber auditor of AI algorithms by making their decision-making processes more transparent and helping data scientists understand when an algorithm might be biased. The company recently partnered with Facebook for the Open Loop initiative to mentor 12 startups in the Asia-Pacific region to prototype ethical and efficient algorithms.

There is a growing field of private auditing firms that review algorithms on behalf of other companies, particularly those that have been criticized for biased outcomes, but it’s unclear if these efforts are truly altruistic or simply done for PR purposes.

Recently, a high-profile case of algorithmic bias put the importance of algorithmic auditing in the spotlight. HireVue, a hiring software company that provides video interview services for major companies like Walmart and Goldman Sachs, was accused of having a biased algorithm, which evaluates people’s facial expressions as a proxy for forecasting job performance. The auditing firm didn’t turn up any biases in the algorithm, according to a press release from HireVue. However, HireVue was accused of using the audit as a PR stunt because the audit only examined a hiring test for early-career candidates, rather than its candidate evaluation process as a whole.

Considering how influential GDPR has been on privacy-focused initiatives, it wouldn’t be surprising to see laws that mandate algorithmic auditing placed on businesses in developed countries that already have strong data protection laws.

“We need to insist on addressing power imbalances and how we can implement AI in more responsible ways within all types of businesses, from startups to enterprises,” said Odubela.

Companies are no longer just collecting data. They’re seeking to use it to outpace competitors, especially with the rise of AI and advanced analytics techniques. Between organizations and these techniques are the data scientists – the experts who crunch numbers and translate them into actionable strategies. The future, it seems, belongs to those who can decipher the story hidden within the data, making the role of data scientists more important than ever.

In this article, we’ll look at 10 careers in data science, analyzing the roles and responsibilities and how best to land each job. Whether you’re more drawn to the creative side or interested in the strategic planning side of data architecture, there’s a niche for you.

Is Data Science A Good Career?

Yes. Besides being a field that comes with competitive salaries, the demand for data scientists continues to increase as they have an enormous impact on their organizations. It’s an interdisciplinary field that keeps the work varied and interesting.

10 Data Science Careers To Consider

Whether you want to change careers or land your first job in the field, here are 10 of the most lucrative data science careers to consider.

Data Scientist

Data scientists represent the foundation of the data science department. At the core of their role is the ability to analyze and interpret complex digital data, such as usage statistics, sales figures, logistics, or market research – all depending on the field they operate in.

They combine their computer science, statistics, and mathematics expertise to process and model data, then interpret the outcomes to create actionable plans for companies.

General Requirements

A data scientist’s career starts with a solid mathematical foundation, whether it’s interpreting the results of an A/B test or optimizing a marketing campaign. Data scientists should have programming expertise (primarily in Python and R) and strong data manipulation skills.

Although a university degree is not always required beyond their on-the-job experience, data scientists benefit from data science courses and certifications that demonstrate their expertise and willingness to learn.

Average Salary

The average salary of a data scientist in the US is $156,363 per year.

Data Analyst

A data analyst explores the nitty-gritty of data to uncover patterns, trends, and insights that are not always immediately apparent. They collect, process, and perform statistical analysis on large datasets and translate numbers and data to inform business decisions.

A typical day in their life can involve using tools like Excel or SQL and more advanced reporting tools like Power BI or Tableau to create dashboards and reports or visualize data for stakeholders. With that in mind, they have a unique skill set that allows them to act as a bridge between an organization’s technical and business sides.

General Requirements

To become a data analyst, you should have basic programming skills and proficiency in several data analysis tools. A lot of data analysts turn to specialized courses or data science bootcamps to acquire these skills.

For example, Coursera offers courses like Google’s Data Analytics Professional Certificate or IBM’s Data Analyst Professional Certificate, which are well-regarded in the industry. A bachelor’s degree in fields like computer science, statistics, or economics is standard, but many data analysts also come from diverse backgrounds like business, finance, or even social sciences.

Average Salary

The average base salary of a data analyst is $76,892 per year.

Business Analyst

Business analysts often have an essential role in an organization, driving change and improvement. That’s because their main role is to understand business challenges and needs and translate them into solutions through data analysis, process improvement, or resource allocation.

A typical day as a business analyst involves conducting market analysis, assessing business processes, or developing strategies to address areas of improvement. They use a variety of tools and methodologies, like SWOT analysis, to evaluate business models and their integration with technology.

General Requirements

Business analysts often have related degrees, such as bachelor’s degrees in Business Administration, Computer Science, or IT. Some roles might require or favor a master’s degree, especially in more complex industries or corporate environments.

Employers also value a business analyst’s knowledge of project management principles like Agile or Scrum and the ability to think critically and make well-informed decisions.

Average Salary

A business analyst can earn an average of $84,435 per year.

Database Administrator

The role of a database administrator is multifaceted. Their responsibilities include managing an organization’s database servers and application tools.

A DBA manages, backs up, and secures the data, making sure the database is available to all the necessary users and is performing correctly. They are also responsible for setting up user accounts and regulating access to the database. DBAs need to stay updated with the latest trends in database management and seek ways to improve database performance and capacity. As such, they collaborate closely with IT and database programmers.

General Requirements

Becoming a database administrator typically requires a solid educational foundation, such as a bachelor’s degree in a data science-related field. Nonetheless, it’s not all about the degree because real-world skills matter a lot. Aspiring database administrators should learn database languages, with SQL being the key player. They should also get their hands dirty with popular database systems like Oracle and Microsoft SQL Server.

Average Salary

Database administrators earn an average salary of $77,391 annually.

Data Engineer

Successful data engineers construct and maintain the infrastructure that allows data to flow seamlessly. Besides understanding data ecosystems day to day, they build and oversee the pipelines that gather data from various sources, making data more accessible for those who need to analyze it (e.g., data analysts).

General Requirements

Data engineering is a role that demands not just technical expertise in tools like SQL, Python, and Hadoop but also a creative problem-solving approach to tackle the complex challenges of managing massive amounts of data efficiently.

Usually, employers look for credentials like university degrees or advanced data science courses and bootcamps.

Average Salary

Data engineers earn a whopping average salary of $125,180 per year.

Database Architect

A database architect’s main responsibility involves designing the entire blueprint of a data management system, much like an architect who sketches the plan for a building. They lay down the groundwork for an efficient and scalable data infrastructure.

Their day-to-day work is a fascinating mix of big-picture thinking and intricate detail management. They decide how to store, consume, integrate, and manage data by different business systems.

General Requirements

If you’re aiming to excel as a database architect but don’t necessarily want to pursue a degree, you could start honing your technical skills. Become proficient in database systems like MySQL or Oracle, and learn data modeling tools like ERwin. Don’t forget programming languages – SQL, Python, or Java.

If you want to take it one step further, pursue a credential like the Certified Data Management Professional (CDMP) or the Data Science Bootcamp by Springboard.

Average Salary

Data architecture is a very lucrative career. A database architect can earn an average of $165,383 per year.

Machine Learning Engineer

A machine learning engineer experiments with various machine learning models and algorithms, fine-tuning them for specific tasks like image recognition, natural language processing, or predictive analytics. Machine learning engineers also collaborate closely with data scientists and analysts to understand the requirements and limitations of data and translate these insights into solutions.

General Requirements

As a rule of thumb, machine learning engineers must be proficient in programming languages like Python or Java, and be familiar with machine learning frameworks like TensorFlow or PyTorch. To successfully pursue this career, you can either pursue a degree or enroll in courses and follow a self-study approach.

Average Salary

Depending heavily on the company’s size, machine learning engineers can earn between $125K and $187K per year, making this one of the highest-paying AI careers.

Quantitative Analyst

Quantitative analysts are essential for financial institutions, where they apply mathematical and statistical methods to analyze financial markets and assess risks. They are the brains behind complex models that predict market trends, evaluate investment strategies, and assist in making informed financial decisions.

They often deal with derivatives pricing, algorithmic trading, and risk management strategies, requiring a deep understanding of both finance and mathematics.

General Requirements

This data science role demands strong analytical skills, proficiency in mathematics and statistics, and a good grasp of financial theory. It always helps if you come from a finance-related background.

Average Salary

A quantitative analyst earns an average of $173,307 per year.

Data Mining Specialist

A data mining specialist uses their statistics and machine learning expertise to reveal patterns and insights that can solve problems. They sift through huge amounts of data, applying algorithms and data mining techniques to identify correlations and anomalies. Data mining specialists also help organizations predict future trends and behaviors.

General Requirements

If you want to land a career in data mining, you should possess a degree or have a solid background in computer science, statistics, or a related field.

Average Salary

Data mining specialists earn $109,023 per year.

Data Visualization Engineer

Data visualization engineers specialize in transforming data into visually appealing graphical representations, much like a data storyteller. A big part of their day involves working with data analysts and business teams to understand the data’s context.

General Requirements

Data visualization engineers need a strong foundation in data analysis and proficiency in programming languages often used in data visualization, such as JavaScript, Python, or R. Some expertise in design principles is a valuable addition that helps them create effective visualizations.

Average Salary

The average annual pay of a data visualization engineer is $103,031.

Resources To Find Data Science Jobs

The key to finding a good data science job is knowing where to look without procrastinating. To make sure you leverage the right platforms, read on.

Job Boards

When hunting for data science jobs, both niche job boards and general ones can be treasure troves of opportunity.

Niche boards are created specifically for data science and related fields, offering listings that cut through the noise of broader job markets. Meanwhile, general job boards can have hidden gems and opportunities.

Online Communities

Spend time on platforms like Slack, Discord, GitHub, or IndieHackers, as they are a space to share knowledge, collaborate on projects, and find job openings posted by community members.

Network And LinkedIn

Don’t forget about socials like LinkedIn or Twitter. The LinkedIn Jobs section, in particular, is a useful resource, offering a wide range of opportunities and the ability to directly reach out to hiring managers or apply for positions. Just make sure not to apply through the “Easy Apply” options, as you’ll be competing with thousands of applicants who bring nothing unique to the table.

FAQs about Data Science Careers

We answer your most frequently asked questions.

Do I Need A Degree For Data Science?

A degree is not a set-in-stone requirement to become a data scientist. It’s true many data scientists hold a bachelor’s or master’s degree, but these just provide foundational knowledge. It’s up to you to pursue further education through courses or bootcamps or work on projects that enhance your expertise. What matters most is your ability to demonstrate proficiency in data science concepts and tools.

Does Data Science Need Coding?

Yes. Coding is essential for data manipulation and analysis, especially knowledge of programming languages like Python and R.

Is Data Science A Lot Of Math?

It depends on the career you want to pursue. Data science involves quite a lot of math, particularly in areas like statistics, probability, and linear algebra.

What Skills Do You Need To Land an Entry-Level Data Science Position?

To land an entry-level job in data science, you should be proficient in several areas. As mentioned above, knowledge of programming languages is essential, and you should also have a good understanding of statistical analysis and machine learning. Soft skills are equally valuable, so make sure you’re acing problem-solving, critical thinking, and effective communication.