Aspiring data specialists should always be on the lookout to get their hands dirty exploring different publicly available data sets. However, finding one to use for practicing a certain skill or tool can be confusing.

Knowing what to look for depending on which skill you want to practice is an integral first step that will set you up for success. This post will break down what to look for, what types of data sets are out there, and what makes one type of data set different from another when you practice your data science skills.

4 Methods for Data Analysis: A Quick Overview

Before we dive into the different types of datasets that are out there, it’s important to define a few methods of data analysis that you’ll come across in your day-to-day responsibilities. Different types of data sets provide different challenges to focus on. Here are a few to get you started.

Data Analysis

First and foremost, data analysis is the use of logical reasoning and statistical analysis on collected data to guide decision making or draw useful conclusions. For successful and efficient data analysis, the best datasets available online will be organized, thorough, and diverse. This opens the door to confident answers and interesting findings. More on this below.

Data Cleaning

Data cleaning happens before the analysis begins and is an integral part of maintaining dataset integrity and keeping the analysis concise and focused. The process requires identifying irrelevant and duplicate data and understanding how to replace, improve, or delete these records. When practicing data cleaning, look for information-rich datasets that give you multiple options for filtering which data to keep.

Data Visualization

Equally important to the data itself is the ability for analysts to communicate findings. After all, these conclusions are what influence business decisions. When launching a new product or service, conclusions from data drive strategies around UI/UX design, pricing models, and growth marketing spend. Data visualization is an important part of making these kinds of choices and involves creating visual assets such as charts, graphs, or maps that represent patterns or trends. Whether you’re a data analyst or a data scientist, having a strong understanding of data visualization is essential in making your goals visible to your team.

Machine Learning Analysis

Machine learning (a subset of data science) is a key concept in working with data: it gives systems the ability to learn from data and improve over time. Datasets that are well suited to ML analysis typically have a large number of data points, because you’ll need to set aside a training set to train your algorithm as well as a test set to evaluate how well it performs. The dataset you choose should be carefully curated and diverse to ensure unique findings and the opportunity to extend the system’s knowledge. The most successful ML data projects tend to be dynamic and long-term, as well as frequently updated.
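To make the training set and test set idea concrete, here is a minimal sketch of a random split using only Python's standard library. The function name `train_test_split`, the 20% test fraction, and the numbered placeholder records are assumptions for illustration, not a prescribed method.

```python
import random

def train_test_split(rows, test_fraction=0.2, seed=42):
    """Shuffle a copy of rows and split it into (train, test) lists."""
    if not 0 < test_fraction < 1:
        raise ValueError("test_fraction must be between 0 and 1")
    shuffled = list(rows)                  # copy so the caller's data is untouched
    random.Random(seed).shuffle(shuffled)  # seeded so the split is reproducible
    n_test = max(1, round(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]

# Hypothetical dataset: 1,000 numbered records standing in for real rows.
records = list(range(1000))
train, test = train_test_split(records)
print(len(train), len(test))  # 800 200
```

The test rows are held back during training so that the evaluation reflects data the algorithm has not seen.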

How to Find the Perfect Data Set in 5 Steps

Step 1: Choose your focus

Before seeking out your next dataset, be sure to have your “why” top of mind. Think about the questions your team will be asking and the goals that you’ll set, such as:

- Are you trying to figure out what time of day customers are most likely to make a conversion?

- Are you analyzing a record of daily active users on your site?

- Are you exploring the engagement trends of your team’s app?

The goal of data analysis is to pull out useful information from data and use it in decision making. Keep that goal at the center of your project to stay motivated.

Step 2: Ensure you have the appropriate amount of data

Whatever set you work with should be rich enough to leave room for thorough data analysis, which means systematically applying statistical techniques to condense the data and extract answers from it. Try to aim for at least a few thousand rows and at least 20 to 25 columns. On the other hand, your data set should never be too busy. If you’re finding yourself getting bogged down with unnecessary information, consider cleaning your data before beginning your analysis.
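A quick way to apply this rule of thumb is to count rows and columns before committing to a dataset. The sketch below assumes a CSV with a header row; the helper names and the exact thresholds (2,000 rows, 20 columns) are illustrative assumptions standing in for "a few thousand rows" and "20 to 25 columns".

```python
import csv
import io

def dataset_shape(csv_file):
    """Return (row_count, column_count) for a CSV that has a header row."""
    reader = csv.reader(csv_file)
    header = next(reader)
    row_count = sum(1 for _ in reader)
    return row_count, len(header)

def is_rich_enough(rows, cols, min_rows=2000, min_cols=20):
    # Thresholds are assumptions based on the rule of thumb above.
    return rows >= min_rows and cols >= min_cols

# Hypothetical tiny CSV held in memory; a real file would be
# opened with open(path, newline="") instead.
sample = io.StringIO("user_id,age,plan\n1,34,free\n2,51,pro\n3,27,free\n")
rows, cols = dataset_shape(sample)
print(rows, cols, is_rich_enough(rows, cols))  # 3 3 False
```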

Step 3: Work with clean data

Data cleaning involves fixing or removing incorrect, corrupted, incorrectly formatted, duplicate, or incomplete data within a dataset. In some cases, data cleaning will involve combing through your data to read and recognize any outliers that don’t belong. You can practice data cleaning using software that uses algorithms or lookup tables to pinpoint any discrepancies to correct issues, dedupe data, and prepare it for analysis.
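As a rough illustration of those cleaning steps, the sketch below normalizes formatting, drops incomplete rows, and removes duplicates. The `clean_records` helper and the sample records are hypothetical, and real projects usually need rules tailored to the dataset.

```python
def clean_records(records, required_fields):
    """Normalize text, drop rows missing required fields, and dedupe."""
    seen = set()
    cleaned = []
    for record in records:
        # Fix formatting: strip whitespace and lowercase string values.
        row = {key: value.strip().lower() if isinstance(value, str) else value
               for key, value in record.items()}
        # Skip incomplete rows.
        if any(row.get(field) in (None, "") for field in required_fields):
            continue
        # Skip rows already seen after normalization.
        fingerprint = tuple(sorted(row.items()))
        if fingerprint in seen:
            continue
        seen.add(fingerprint)
        cleaned.append(row)
    return cleaned

raw = [
    {"email": "ana@example.com", "city": "Lisbon"},
    {"email": " Ana@Example.com ", "city": "lisbon"},  # duplicate once normalized
    {"email": "", "city": "Porto"},                    # incomplete
    {"email": "bo@example.com", "city": "Oslo"},
]
cleaned = clean_records(raw, required_fields=["email", "city"])
print(len(cleaned))  # 2
```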

Best data cleaning tools

If you’re looking to transform data from one format into another, OpenRefine is an open-source data cleaning tool that can accommodate a few hundred thousand rows of data and give you access to a host of editing tools. Another popular tool used in data cleaning is Data Ladder. Often described as a fast and accurate solution, this tool is helpful in standardizing and preparing your information for other analytics strategies. Another benefit of Data Ladder is its ability to integrate with other platforms you may be using in your business, such as SAP and Salesforce.

Step 4: Look for a diverse range of variables

Your dataset should have a mix of both continuous and categorical variables. Categorical variables divide records into groups, such as race, sex, and educational level. Continuous variables are measured rather than counted and can take any value within a range. Examples include age, weight, and temperature. If you start with a dataset in which a few columns appear to be neither categorical nor continuous, data cleaning is a necessary next step. Too wide a range of variables introduces the possibility of overgeneralized conclusions.
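One rough way to check the mix of variables is to label each column with a simple heuristic. In the sketch below, the function name, the `max_categories` cutoff of 10, and the sample columns are all assumptions; numeric codes with only a few distinct values are deliberately treated as categorical.

```python
def infer_variable_type(values, max_categories=10):
    """Label a column 'continuous' or 'categorical' with a simple heuristic."""
    try:
        [float(value) for value in values]
        numeric = True
    except (TypeError, ValueError):
        numeric = False
    distinct = len(set(values))
    # Numeric columns with many distinct values are treated as continuous.
    if numeric and distinct > max_categories:
        return "continuous"
    return "categorical"

# Hypothetical columns for illustration.
columns = {
    "weight_kg": [60.5 + i * 0.7 for i in range(30)],
    "education": ["high school", "bachelor", "master"] * 10,
    "plan_code": [1, 2, 3] * 10,
}
types = {name: infer_variable_type(values) for name, values in columns.items()}
print(types)
```

A heuristic like this only flags candidates; columns it cannot classify cleanly are the ones worth inspecting by hand.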

Categorical Data Analysis

Analysis of categorical data generally involves the use of data tables. For example, suppose a survey was conducted of a group of 20 individuals, who were asked to identify their hair and eye color. A two-way table could represent their responses, with hair color in the rows and eye color in the columns. The totals for each category count the individuals in it regardless of the other variable. Conclusions within categorical data are often represented by percentages; for example, if 4 of the 20 individuals had red hair, then 20% of the respondents are redheads.
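The hair and eye color survey can be tallied in a few lines. The individual responses below are made up for illustration; only the totals (20 respondents, 4 of them redheads) come from the example above.

```python
from collections import Counter

# Hypothetical responses from 20 people as (hair, eye) pairs.
responses = (
    [("brown", "brown")] * 6 + [("brown", "blue")] * 2 +
    [("blond", "blue")] * 5 + [("blond", "green")] * 3 +
    [("red", "green")] * 3 + [("red", "blue")] * 1
)

cell_counts = Counter(responses)                          # each cell of the two-way table
hair_totals = Counter(hair for hair, _ in responses)      # row totals
eye_totals = Counter(eye for _, eye in responses)         # column totals

red_share = hair_totals["red"] / len(responses)
print(f"{red_share:.0%} of respondents have red hair")  # 20% of respondents have red hair
```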

Continuous Data Analysis

With continuous data, strong insights can often be drawn from fewer data points, which can make it less expensive to gather, and it often yields higher sensitivity in terms of how close to the target any conclusions will land. A key advantage of continuous data is that it can be divided into finer and finer levels, allowing for this high sensitivity. For example, you can measure your height on scales of increasing precision, from meters to millimeters and beyond. Continuous data can also be used in hypothesis testing, for example to compare group means with t-tests.
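As a small worked example of a t-test on continuous data, the sketch below computes Welch's two-sample t statistic with the standard library. The height measurements are hypothetical, and turning the statistic into a p-value needs the t distribution, which a library such as SciPy provides (`scipy.stats.ttest_ind` with `equal_var=False`).

```python
from math import sqrt
from statistics import mean, variance

def welch_t_statistic(sample_a, sample_b):
    """Two-sample t statistic that does not assume equal variances."""
    var_a, var_b = variance(sample_a), variance(sample_b)  # sample variances (n - 1)
    standard_error = sqrt(var_a / len(sample_a) + var_b / len(sample_b))
    return (mean(sample_a) - mean(sample_b)) / standard_error

# Hypothetical heights in centimeters for two small groups.
group_a = [170.2, 168.5, 174.1, 171.3, 169.8]
group_b = [165.0, 166.4, 163.9, 167.2, 164.5]

t = welch_t_statistic(group_a, group_b)
print(round(t, 2))
```

A larger absolute value of the statistic means the difference between the group means is large relative to the variation within each group.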

Step 5: Avoid busy data sets

Finding a balance between sparse and excessive data comes down to avoiding overfitting and keeping your focus. If a dataset has too many variables, any reasoning pulled from it will be hard to connect with reality. If the dataset’s range is too wide, correlations will not be specific enough to be applicable. It all boils down to how well your conclusions hold up. If more data weakens the strength of your argument, examine your assumptions about what is relevant. A major indicator of a busy dataset shows up in pattern recognition: choosing too many factors will lead to poor results, so focus on the quality and reliability of the data to recognize when the set may have too much of it.

3 Different Types of Datasets and Their Benefits

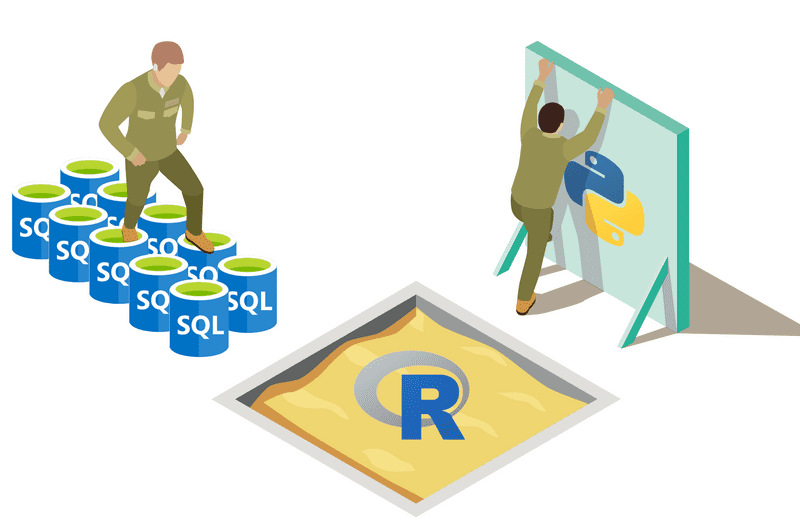

Different datasets have different requirements for working with them properly. For example, graph-based datasets lend themselves to a visualization tool such as Tableau for creating charts and visual assets, while spatial datasets call for geoprocessing tools to manage and organize the spatial data.

Additionally, you’ll frequently use SQL to query relational databases, sometimes several at once. Having a solid grasp of the language will make it much easier to communicate your findings through intuitive dashboards that can serve as an intermediary between end-users and a more complex data storage system.
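For a feel of what that SQL looks like in practice, here is a small sketch that joins two tables and aggregates the result, using Python's built-in `sqlite3` module and an in-memory database. The `users` and `orders` tables and their contents are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")  # throwaway in-memory database
conn.executescript("""
    CREATE TABLE users  (user_id INTEGER PRIMARY KEY, plan TEXT);
    CREATE TABLE orders (order_id INTEGER PRIMARY KEY, user_id INTEGER, total REAL);
    INSERT INTO users  VALUES (1, 'free'), (2, 'pro'), (3, 'pro');
    INSERT INTO orders VALUES (10, 1, 20.0), (11, 2, 35.5), (12, 2, 14.5), (13, 3, 50.0);
""")

# Join the two tables and total the revenue for each plan.
revenue_by_plan = conn.execute("""
    SELECT u.plan, SUM(o.total) AS revenue
    FROM users AS u
    JOIN orders AS o ON o.user_id = u.user_id
    GROUP BY u.plan
    ORDER BY u.plan
""").fetchall()
conn.close()

print(revenue_by_plan)  # [('free', 20.0), ('pro', 100.0)]
```

A query result like this is the kind of summary a dashboard would display, without exposing end-users to the underlying tables.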

Being able to recognize distinguishing factors between datasets will sharpen your approach and lead to more accurate predictions and insights.

Record Datasets

Record data has no explicit relationship among records or data fields. Each object has the same set of attributes stored in flat files or relational databases. These types of datasets are the most common, as data mining work typically assumes data is a collection of data objects. Record datasets such as the United States Census Data or this dataset from Instacart are best in terms of accessibility and simplicity: they’re easy to read and well suited to practice for beginners.

Record datasets also provide a great platform for more advanced techniques like machine learning, where a model learns from the dataset in order to make predictions and improve over time. Since record datasets are so straightforward, they’re great for using AI to sort through patterns and trends and form predictions. It’s important to note that other data like images, videos, and unstructured text can also be used in machine learning, but they generally need additional preprocessing before a model can work with them.

When it comes to ML, it’s important to make sure the dataset you use is high-quality, accurate, and frequently updated, as machine learning algorithms function through a process called inductive learning. This process forms models from training data in order to form generalizations and predictive analysis that can be applied to your dataset. Knowing how to use ML in data analysis is an extremely important skill today, as predictive analysis can have major real-world impacts—as evidenced in these COVID-19 projections.

Graph-Based Datasets

A graph-based dataset (GBD) uses graph structures and semantic queries to display and sort data. Data object relationships are found in the links between objects and in link properties such as direction or weight. The structured nature of graph-based datasets allows for sub-object relationships, which can be represented as graphs as well. The key benefit of using GBDs is that they lend themselves to an easy transition into data visualization. This type of dataset is a step up from a record dataset, as it introduces an additional level of organization.
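To show how links and link properties might be stored, the sketch below keeps a directed, weighted graph as a dictionary of dictionaries. The node names, weights, and the `out_neighbors` helper are hypothetical and chosen only for illustration.

```python
from collections import defaultdict

# Hypothetical directed, weighted edges: (source, target, weight).
edges = [
    ("alice", "bob", 3),
    ("alice", "carol", 1),
    ("bob", "carol", 2),
    ("carol", "alice", 5),
]

graph = defaultdict(dict)
for source, target, weight in edges:
    graph[source][target] = weight  # direction: source -> target

def out_neighbors(node):
    """Nodes one step away from node, heaviest link first."""
    links = graph.get(node, {})
    return sorted(links, key=links.get, reverse=True)

print(out_neighbors("alice"))  # ['bob', 'carol']
```

Because each link carries its own direction and weight, the relationship between two objects is data in its own right rather than something inferred from shared columns.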

Graph-based datasets can be very helpful in communicating data at large, and they are especially effective for analyzing data that’s continuously being gathered. For example, this data uses graphs to represent the polling averages of the 2020 U.S. election. Similarly, this data set explores the primary debates to track common word combinations, who got the most applause, and more, all represented through different graphical analyses.

Ordered Datasets

Ordered data sets require a user-specified key for organization. They are kept in a physical sequence based on this chosen key and don’t require using a set. Ordered data sets such as this FBI public data set can be split according to the type of data, such as:

- Temporal data is an extension of record data, where each object has a time connection. For example, it can be used to track crime patterns by time of day.

- Sequence data includes a sequence of individual entities. It’s similar to temporal data, but involves letters or numbers instead of time. (A familiar example of sequence data is a gene sequence.)

- Time-series datasets blend the first two by recording a series of values over time. You can find an example of a public ordered dataset that uses time-series data in the weekly returns of the Dow Jones Index. Another real-world application of time-series data can be found in this Bloomberg article that compares financial statistics with COVID-19 data. Within the article, there is an analysis of GDP and reported cases and how the numbers have fluctuated over time.

- Spatial data includes objects that have spatial attributes such as locations or areas.

The biggest benefit of choosing an ordered dataset is the opportunity for easy-to-find, real-world applications, such as weather patterns in your city.
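The temporal case, tracking patterns by time of day, can be sketched in a few lines: order the records by a chosen key (here the timestamp), then count events per hour. The incident log below is hypothetical and is not drawn from the FBI dataset.

```python
from collections import Counter
from datetime import datetime

# Hypothetical incident log as (ISO timestamp, category) pairs, in arbitrary order.
incidents = [
    ("2021-03-02T23:15:00", "theft"),
    ("2021-03-01T08:40:00", "vandalism"),
    ("2021-03-02T23:50:00", "theft"),
    ("2021-03-01T14:05:00", "theft"),
]

# Order the records by the chosen key, here the timestamp.
ordered = sorted(incidents, key=lambda item: datetime.fromisoformat(item[0]))

# Temporal view: how many incidents fall in each hour of the day.
by_hour = Counter(datetime.fromisoformat(stamp).hour for stamp, _ in ordered)
busiest_hour, count = by_hour.most_common(1)[0]
print(busiest_hour, count)  # 23 2
```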
