Data engineering sits at the confluence of software engineering and data science, so it helps to have skills from each discipline. In fact, many data engineers start off as software engineers, given that data engineering relies heavily on programming.

What skills do you need to become a data engineer? Learn how to grow your data engineer skillset with this introductory guide.

What Are the Key Skills Needed for a Data Engineer Career?

Data engineers are expected to know how to build and maintain database systems, be fluent in languages such as SQL, Python, and R, be adept at choosing data warehousing solutions and using ETL (Extract, Transform, Load) tools, and understand basic machine learning and algorithms.

A data engineer’s skillset should also consist of soft skills, including communication and collaboration. Data science is a highly collaborative field, and data engineers work with a range of stakeholders, from data analysts to CTOs.

5 Essential Programs Data Engineers Use

Data engineers use the following five essential programs.

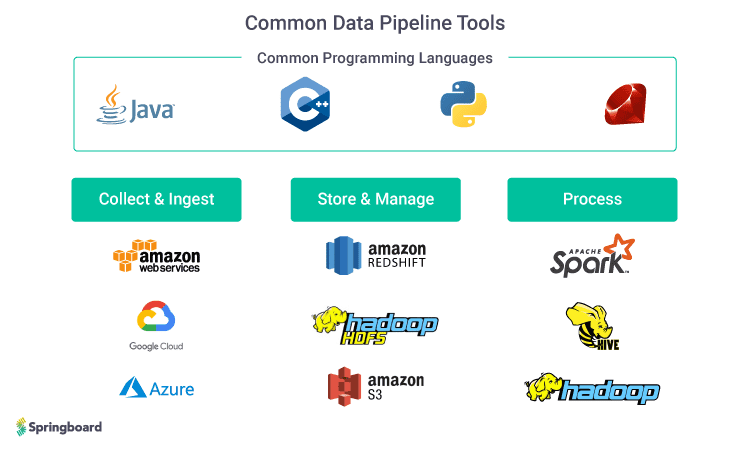

- Apache Hadoop and Apache Spark. The Apache Hadoop software library is a framework that allows for the distributed processing of large data sets across clusters of computers using simple programming models. It is designed to scale up from single servers to thousands of machines, each offering local computation and storage. Hadoop itself is written in Java, and its MapReduce jobs are most commonly written in Java as well, although other languages can be used through interfaces such as Hadoop Streaming. Hadoop is one of the most established tools in big data, but its drawbacks include relatively slow, disk-based processing and a lot of boilerplate code. Apache Spark is a data processing engine that covers many of the same use cases, with APIs for Python, Scala, Java, and R. Spark processes data in memory where possible, which is often much faster, and it also supports stream processing, which involves the continuous input and output of data. Hadoop MapReduce, on the other hand, uses batch processing: data is collected in batches and then processed in bulk later, so results arrive with more delay.

- C++. C++ is a powerful, compiled programming language that is well suited to computing over large data sets quickly, including cases where you don’t have a predefined algorithm. Because it compiles to native code and gives fine-grained control over memory, well-written C++ can process data at very high throughput, which is why it is often used for performance-critical components. It can also be used to retrain models on new data and apply predictive analytics in real time while maintaining consistency of the system of record.

- Amazon Web Services/Redshift (for data warehousing). A data warehouse is a relational database designed for query and analysis. It’s designed to provide a long-range view of data over time. A transactional database, by contrast, is optimized for rapidly updating real-time data. Data engineers should be familiar with popular data warehousing services such as Amazon Redshift, which is part of Amazon Web Services (AWS). Many data engineer job descriptions specifically list AWS as a requirement.

- Azure. Microsoft’s Azure is a cloud technology that enables data engineers to build large-scale data analytics systems. It automates the setup and support of servers and applications with a packaged analytics system that is easy to deploy. The software contains pre-built services for everything from data storage to advanced machine learning. In fact, Azure is so widely used that some data engineers specialize in it.

- HDFS and Amazon S3. These storage systems are used to hold data during processing and can scale to store a virtually unlimited amount of data. HDFS (the Hadoop Distributed File System) spreads files across the machines in a Hadoop cluster, while Amazon S3 is a cloud object storage service, so data stored in it can be accessed from anywhere. S3 has a wide variety of uses besides big data analytics, including storing data for websites, mobile applications, enterprise applications, and IoT devices.
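
To make the batch versus stream distinction from the Hadoop and Spark entry more concrete, here is a minimal sketch in plain Python. It does not use Hadoop or Spark at all; the function names `batch_total` and `stream_totals` and the sample readings are hypothetical and exist only to illustrate the idea.

```python
from typing import Iterable, Iterator, List


def batch_total(records: List[int]) -> int:
    """Batch style: wait until all records are collected, then process in bulk."""
    return sum(records)


def stream_totals(records: Iterable[int]) -> Iterator[int]:
    """Stream style: update and emit a running total as each record arrives."""
    running = 0
    for value in records:
        running += value
        yield running


if __name__ == "__main__":
    readings = [3, 5, 2, 10]  # made-up sample data
    print(batch_total(readings))          # a single result once all data is in
    print(list(stream_totals(readings)))  # one intermediate result per record
```

The batch function cannot return anything until the whole list exists, while the streaming generator produces a usable result after every record. Real engines add distribution, fault tolerance, and windowing on top of this basic difference.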

8 Essential Data Engineer Technical Skills

Aside from a strong foundation in software engineering, data engineers need to be literate in programming languages used for statistical modeling and analysis, data warehousing solutions, and building data pipelines.

- Database systems (SQL and NoSQL). SQL is the standard language for querying and managing relational database systems, which store data in tables that consist of rows and columns. NoSQL databases are non-tabular and come in a variety of types depending on their data model, such as graph or document. Data engineers must know how to work with database management systems (DBMS), which are software applications that provide an interface to databases for information storage and retrieval.

- Data warehousing solutions. Data warehouses store huge volumes of current and historical data for query and analysis. This data is brought in from numerous sources, such as a CRM system, accounting software, and ERP software. The data is then used by the organization for reporting, analytics, and data mining. Many employers expect entry-level engineers to be familiar with Amazon Web Services (AWS), a cloud services platform with a whole ecosystem of data storage tools.

- ETL tools. ETL (Extract, Transform, Load) refers to how data is taken (extracted) from a source, converted (transformed) into a format that can be analyzed, and stored (loaded) in a data warehouse. This process traditionally uses batch processing to help users analyze data relevant to a specific business problem. An ETL pipeline pulls data from various sources, applies certain rules to the data according to business requirements, and then loads the transformed data into a database or business intelligence platform so it can be used and viewed by anyone in the organization.

- Machine learning. Machine learning models help data scientists make predictions based on current and historical data. Data engineers only need a basic knowledge of machine learning, as it enables them to better understand a data scientist’s needs (and, by extension, the organization’s needs), get models into production, and build more accurate data pipelines.

- Data APIs. An API is an interface used by software applications to access data. It allows two applications or machines to communicate with each other for a specified task. For example, web applications use APIs to allow the user-facing front end to communicate with the back-end functionality and data. When a request is made on a website, an API allows the application to read the database, retrieve information from the relevant tables, process the request, and return an HTTP-based response to the web template, which is then displayed in the web browser. Data engineers build APIs on top of databases to enable data scientists and business intelligence analysts to query the data.

- Python, Java, and Scala programming languages. Python is one of the most widely used programming languages for statistical analysis and modeling. Java is widely used in data architecture frameworks, and many of their APIs are designed for Java. Scala is a separate language that runs on the JVM (the Java Virtual Machine, which enables a computer to run Java programs) and is interoperable with Java.

- Understanding the basics of distributed systems. Hadoop fluency is one of the most important data engineer skills. The Apache Hadoop software library is a framework that allows for the distributed processing of large data sets across clusters of computers using simple programming models. It is designed to scale up from single servers to thousands of machines, each offering local computation and storage. Apache Spark is one of the most widely used data processing engines in data science and is written primarily in the Scala programming language.

- Knowledge of algorithms and data structures. Data engineers focus mostly on data filtering and data optimization, but a basic knowledge of algorithms is helpful for understanding the big picture of the organization’s overall data function, as well as for defining checkpoints and end goals for the business problem at hand.
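
The extract, transform, and load steps described in the ETL entry above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the standard-library `sqlite3` module stands in for a real data warehouse, and the CSV text, the `sales` table, and the business rules (trim whitespace, drop rows with no amount) are all made up for the example.

```python
import csv
import io
import sqlite3

# Hypothetical raw export from a source system such as a CRM; values are made up.
RAW_CSV = """customer,amount,currency
alice, 10.50 ,usd
bob,7,USD
carol,,usd
"""


def extract(text):
    """Extract: read rows from the source as dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Transform: apply simple rules (trim, normalize case, drop incomplete rows)."""
    cleaned = []
    for row in rows:
        amount = row["amount"].strip()
        if not amount:
            continue  # assumed rule: skip rows that have no amount
        cleaned.append(
            (row["customer"].strip().title(), float(amount), row["currency"].strip().upper())
        )
    return cleaned


def load(rows, conn):
    """Load: write the cleaned rows into a relational table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS sales (customer TEXT, amount REAL, currency TEXT)"
    )
    conn.executemany("INSERT INTO sales VALUES (?, ?, ?)", rows)
    conn.commit()


def run_pipeline(conn):
    """Run the three steps in order against the sample data."""
    load(transform(extract(RAW_CSV)), conn)


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")  # in-memory stand-in for a warehouse
    run_pipeline(conn)
    # Query the loaded data with SQL, as an analyst might.
    print(conn.execute("SELECT customer, amount FROM sales ORDER BY customer").fetchall())
```

Keeping each step in its own function mirrors how production pipelines are usually organized: the source, the rules, and the destination can each change without touching the others.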

Important Soft Skills for Data Engineers

Data engineers also require a handful of soft skills in their arsenal to perform their job duties.

- Communication skills. On a typical day, data engineers interface with machine learning engineers, data analysts, CTOs, and developers. They may also work with other teams or business units to gather requirements and define the scope of a project. Communication skills are paramount for collaborating effectively. It’s also important for a data engineer to show an understanding of the underlying business problem they are trying to address and articulate how their work will help the bottom line.

- Collaboration. When teams depend on each other for deliverables, they need to have a healthy give-and-take relationship to keep projects running smoothly. Data engineers need to understand the expectations of the teams they’re working with, how frequently they need to be updated, and what their pain points are. Understanding where this work fits in within the overall business helps data engineers be of service to other teams and come up with better ideas to collaborate.

- Presentation skills. Depending on the size of the data science team, data engineers may be expected to perform data analysis and present their findings to stakeholders. Learning effective public speaking and how to explain technical data concepts in the context of solving a business problem will make a data engineer a compelling orator and increase the chances that their recommendations will be acted upon.

Data Engineering Educational Backgrounds

Since data engineering is a relatively new field, there are no formal data engineer qualifications and no single optimal educational background.

- A bachelor’s degree in mathematics, statistics, computer science, or a business-related field is helpful but not required. An online bootcamp or course can provide a foundation in advanced statistics and in programming languages that can be used to mine and query data and, in some cases, to work with big data SQL engines.

- More importantly, data engineers are skilled software engineers who understand database architecture and how to build data pipelines. Since it is still relatively hard to find a university curriculum that supports this, a practical option is teaching yourself through an online bootcamp that specializes in data science or data engineering. This will teach you the main programming languages used by data engineers (Python, R, SQL) as well as machine learning, building data pipelines, and choosing data warehousing solutions.
